Facets are used with the ContentSearch API to filter and group search results by specific fields or properties, so users can narrow the results down to what they are looking for. Here’s a guide on how to use facets with ContentSearch in Sitecore.

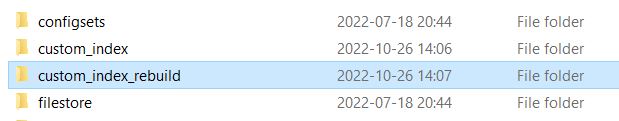

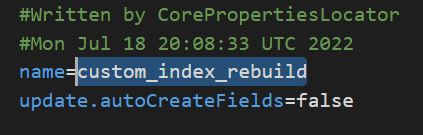

Define the facet field in your search index configuration:

First, ensure that the field you want to use as a facet is included in your search index configuration. For example, if you want to use the “Manufacturer” field as a facet, you should have it defined in your search index configuration.
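As a rough illustration of that configuration step, the patch file below maps the field to an untokenized string type so that facet values are not split into separate words. It is a sketch that assumes a Solr-based index using the default Solr index configuration; the field name `manufacturer` is the example from this post, and your own index configuration node may differ.

```xml
<configuration xmlns:patch="http://www.sitecore.net/xmlconfig/">
  <sitecore>
    <contentSearch>
      <indexConfigurations>
        <!-- Assumption: the index uses the default Solr index configuration -->
        <defaultSolrIndexConfiguration>
          <fieldMap>
            <fieldNames hint="raw:AddFieldByFieldName">
              <!-- Store the field as a plain string so each value stays one facet entry -->
              <field fieldName="manufacturer" returnType="string" />
            </fieldNames>
          </fieldMap>
        </defaultSolrIndexConfiguration>
      </indexConfigurations>
    </contentSearch>
  </sitecore>
</configuration>
```

After changing the configuration, the index typically needs to be rebuilt before the field shows up with the new type.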

Create a model class for your search result items:

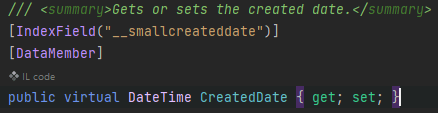

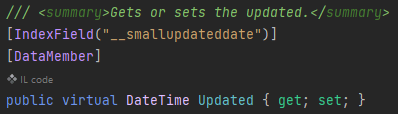

Create a model class that inherits from SearchResultItem and includes the properties you want to use as facets. I would recommend putting the IndexField name in a constants file so you can reuse it everywhere in your solution: if it ever changes, you only need to change it in one place, and it won’t break the code. For example:
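The constants file could be as small as the sketch below. The class name `IndexFieldNames` is hypothetical, and where it lives in your solution is up to you.

```csharp
// Hypothetical constants class; the name and its location are assumptions.
public static class IndexFieldNames
{
    // Must match the field name as it is stored in the search index.
    public const string Manufacturer = "manufacturer";
}
```

Because it is a `const`, it can be used directly in the attribute, as in `[IndexField(IndexFieldNames.Manufacturer)]`. The model class itself, with the attribute on the property, looks like this: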

    using Sitecore.ContentSearch;
    using Sitecore.ContentSearch.SearchTypes;

    public class CustomSearchResultItem : SearchResultItem
    {
        [IndexField("manufacturer")] // Or use a constant in your foundation project
        public string Manufacturer { get; set; }
    }

Perform a faceted search:

To perform a faceted search, use the .FacetOn() method in your search query. This method tells the search provider to calculate the count of items for each unique value in the specified field.

Here’s an example of performing a faceted search on the “manufacturer” field:

    // Requires: using System.Linq; using Sitecore.ContentSearch; using Sitecore.ContentSearch.Linq;
    using (var context = ContentSearchManager.GetIndex("sitecore_web_index").CreateSearchContext())
    {
        // Create the base search query
        IQueryable<CustomSearchResultItem> query = context.GetQueryable<CustomSearchResultItem>()
            .Where(item => item.TemplateName == "YourTemplateName");

        // Add the facet to the query
        query = query.FacetOn(item => item.Manufacturer);

        // Execute the search and get the facets
        var searchResults = query.GetResults();
        var manufacturerFacetResults = searchResults.Facets.Categories.FirstOrDefault(x => x.Name == "manufacturer");
    }
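If you only need the facet counts and not the hits themselves, a variation on the query above is to call `GetFacets()` instead of `GetResults()`. The sketch below also passes a minimum count to `FacetOn` so that values with no matching items are left out. The `ManufacturerFacets` class and its `Get` method are hypothetical names used for illustration, and `CustomSearchResultItem` is the model class from the previous step.

```csharp
using System.Linq;
using Sitecore.ContentSearch;
using Sitecore.ContentSearch.Linq;

// Hypothetical helper; the class and method names are assumptions.
public static class ManufacturerFacets
{
    // Returns the "manufacturer" facet category, or null if the index returned none.
    public static FacetCategory Get(string indexName, string templateName)
    {
        using (var context = ContentSearchManager.GetIndex(indexName).CreateSearchContext())
        {
            FacetResults facets = context.GetQueryable<CustomSearchResultItem>()
                .Where(item => item.TemplateName == templateName)
                // Only return facet values that have at least 1 matching item
                .FacetOn(item => item.Manufacturer, 1)
                // Fetch the facet counts without loading the result items
                .GetFacets();

            return facets.Categories.FirstOrDefault(c => c.Name == "manufacturer");
        }
    }
}
```

A call such as `ManufacturerFacets.Get("sitecore_web_index", "YourTemplateName")` would then give you the same category object that the `GetResults()` example reads from `searchResults.Facets`.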

Process the facet results:

In the example above, the manufacturerFacetResults variable contains the facet results for the “manufacturer” field. You can now process these results to display the facet options to the users or apply further filtering based on user input.

Here’s an example of processing the facet results and displaying them as a list of options:

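    // Place this inside the using block above, where manufacturerFacetResults is in scope
    if (manufacturerFacetResults != null)
    {
        foreach (var facetValue in manufacturerFacetResults.Values)
        {
            string manufacturer = facetValue.Name;  // the facet value, for example a manufacturer name
            int count = facetValue.AggregateCount;  // number of items with that value

            // Render the facet option here
        }
    }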
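In practice you will probably want to hand the facet values to a view rather than loop over them in place. One possible way to do that is sketched below: it maps a facet category to a simple list of options, sorted by count. `FacetOption` and `FacetOptionMapper` are hypothetical names, not part of the Sitecore API.

```csharp
using System.Collections.Generic;
using System.Linq;
using Sitecore.ContentSearch.Linq;

// Hypothetical view model for a single facet option.
public class FacetOption
{
    public string Name { get; set; }
    public int Count { get; set; }
}

// Hypothetical mapper; the class and method names are assumptions.
public static class FacetOptionMapper
{
    // Converts a facet category into display options, most frequent first.
    public static List<FacetOption> ToOptions(FacetCategory category)
    {
        // No category means the field produced no facet values
        if (category == null)
        {
            return new List<FacetOption>();
        }

        return category.Values
            .Where(v => v.AggregateCount > 0)          // hide empty options
            .OrderByDescending(v => v.AggregateCount)  // biggest groups first
            .ThenBy(v => v.Name)                       // stable order for equal counts
            .Select(v => new FacetOption { Name = v.Name, Count = v.AggregateCount })
            .ToList();
    }
}
```

With the variable from the search example, the call would be `FacetOptionMapper.ToOptions(manufacturerFacetResults)`. Returning an empty list instead of null keeps the rendering code free of null checks.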

Apply facet filters to the search query:

Based on user input, you can apply facet filters to the search query to narrow down the search results. For example, if a user selects a specific manufacturer from the facet options, you can add a filter to the search query:

    string selectedManufacturer = "example";

    using (var context = ContentSearchManager.GetIndex("sitecore_web_index").CreateSearchContext())
    {
        IQueryable<CustomSearchResultItem> query = context.GetQueryable<CustomSearchResultItem>()
            .Where(item => item.TemplateName == "YourTemplateName")
            .Where(item => item.Manufacturer == selectedManufacturer)
            .FacetOn(item => item.Manufacturer);

        var searchResults = query.GetResults();
    }

I hope you found this helpful. 🙂